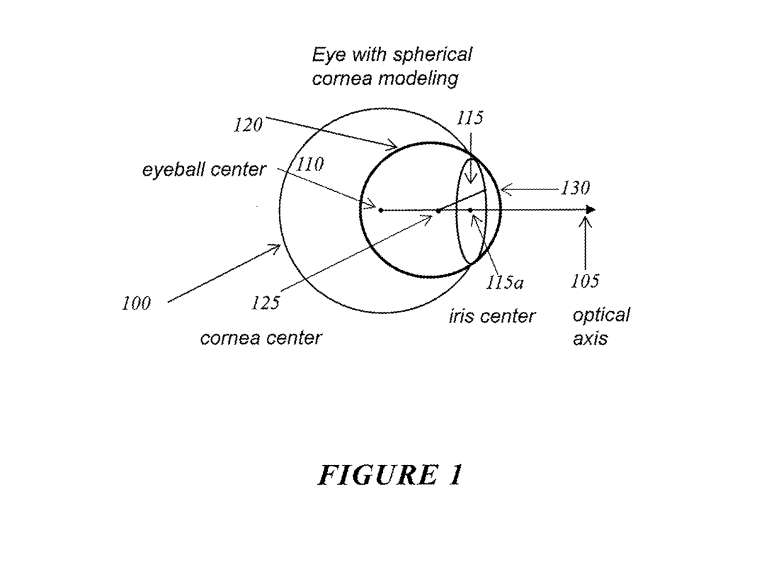

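"Apple Patent | Spatial audio reproduction with head-to-torso orientation" describes a method that determines a head-to-source orientation and a head-to-torso orientation from head orientation data. The head-to-torso orientation is then applied to a binaural audio filter to produce the audio output signal, enabling accurate spatial audio reproduction. "Apple Patent | Electronic devices with motion-based color correction" discloses a method for automatically correcting colors on an electronic device based on lighting conditions and detected motion, where the color adaptation speed can be adjusted according to the lighting conditions and the amount of detected motion. "Apple Patent | Dynamic brightness range for passthrough display content" presents a method for dynamically adjusting the brightness range of passthrough content on a head-mounted device: the maximum allowable brightness is adjusted according to how well the user has adapted to the display, so that passthrough content is presented better. "Samsung Patent | Display device" describes a display device in which a gate electrode is disposed on an active pattern and a capacitor is disposed on the gate electrode, forming a capacitor unit. The above are summaries and highlights of some of these AR/VR patents.

Another Samsung patent discloses a pixel circuit and display device that include components such as a light-emitting element, a first transistor, and a first capacitor, along with a second transistor that responds to a write gate signal. Samsung also describes a display device and driving method that use near-infrared light, sensor pixels, and an eye feature model to achieve eye tracking and high-resolution display, as well as a sub-pixel and display device that include a light-emitting element, a first transistor, and a second transistor. Sony's patents cover light guide plates, information processing devices, point cloud compression, and head-mounted displays. Snap's patents cover eye-tracking calibration, augmented reality content ranking, and XR experiences based on generative model output. Google's patents involve reflective waveguides, in-eye displays, image calibration, and VR-based shopping. Finally, Microsoft's patents involve moving-platform tracking, depth map generation, and intelligent placement of information in dynamic environments. Together, these patents reflect innovation and development trends in the XR industry.

1. "Snap Patent | Projecting images in augmented reality environments based on visual markers" describes a method for projecting images in augmented reality based on visual markers. It involves using a digital image sensor on a computing device to detect visual markers and determine their position and boundaries, and then displaying the images on a digital display, creating the illusion that the images are projected onto the physical space.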

2. "Snap Patent | Texture generation using multimodal embeddings" describes a method for generating texture in augmented reality. It involves storing interaction data representing one or more interactions in a multimodal memory, detecting objects depicted in images captured by an interaction client, generating prompts based on the objects and the stored interaction data, generating an artificial texture based on the prompts, and modifying the texture of the objects depicted in the images based on the artificial texture.

3. "Snap Patent | Product image generation based on diffusion model" describes a system for generating product images based on a diffusion model. It involves receiving images depicting real-world objects, generating prompts that include textual descriptions of fashion items, analyzing the images and textual descriptions using a generative machine learning model, generating artificial images depicting fashion items that match the textual descriptions, and replacing the artificial fashion items with real-world product images that match the visual attributes of the artificial fashion items.

4. "Snap Patent | Scannable codes as landmarks for augmented-reality content" describes a method for generating and displaying augmented-reality content based on scannable codes. It involves generating a scan request on a client device, determining the position and orientation of the encoded image in 3D space, defining a reference point on the client device based on the encoded image, accessing media content from a media library based on the reference point, and displaying the media content on the client device based on the reference point.

5. "Snap Patent | Ingestion pipeline for generating augmented reality content generators" describes a pipeline for generating augmented reality content generators. It involves generating a 3D model file of a product, converting the 3D model file to a 3D object file, associating the 3D object file with a product catalog service, and publishing the augmented reality content generator associated with the product.

6. "Snap Patent | 3D content display using head-wearable apparatuses" describes a method for displaying 3D content using head-wearable apparatuses. It involves determining the position of content items that are closest to the curves of the head-wearable apparatus, adjusting the shape of the content items based on the position of the content items and the user's viewpoint, and displaying the adjusted content items on the display of the head-wearable apparatus.

7. "Snap Patent | Alignment of augmented reality components with the physical world" describes a system for aligning augmented reality components with the physical world. It involves obtaining surface plane information, detecting edges in an image, and presenting virtual content with orientation and position based on the edges and the surface plane information.

8. "Snap Patent | Avatar call platform" describes a platform for generating and displaying animated avatars of callers during a call. The facial expressions of the animated avatars match the expressions of the callers during the call.

9. "Qualcomm Patent | Receiver side prediction of encoding selection data for video encoding" describes a method for encoding and decoding video data. It involves receiving encoding selection data indicating the encoding scheme for a third picture based on the encoding scheme used for a second picture, and encoding the third picture based on the encoding selection data.

10. "Qualcomm Patent | Receiver selected decimation scheme for video coding" describes a method for encoding and decoding video data. It involves receiving a decimation mode indicator indicating a decimation mode for a second group of pictures based on the decimation mode used for a first group of pictures, and encoding the second group of pictures based on the decimation mode.
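Item 1 does not say how the image is rendered onto the detected marker. One common way to make a flat image look "projected" onto a marker is to warp it with a planar homography that maps the image's corners onto the marker's detected corners. The sketch below assumes the four marker corners have already been found in pixel coordinates and are listed in the same order as the image corners; the function names and coordinates are hypothetical, and this is a generic technique rather than Snap's method.

```python
import numpy as np

def homography_from_corners(src, dst):
    """Direct linear transform for exactly four point pairs, fixing h33 = 1."""
    A, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        # u = (h11 x + h12 y + h13) / (h31 x + h32 y + 1), and likewise for v,
        # rearranged into two linear equations in the eight unknowns.
        A.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        A.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.extend([u, v])
    h = np.linalg.solve(np.array(A, dtype=float), np.array(b, dtype=float))
    return np.append(h, 1.0).reshape(3, 3)

def warp_points(H, pts):
    """Map 2D points through H using homogeneous coordinates."""
    pts = np.asarray(pts, dtype=float)
    mapped = np.hstack([pts, np.ones((len(pts), 1))]) @ H.T
    return mapped[:, :2] / mapped[:, 2:3]

# Corners of a 640x480 source image and hypothetical detected marker corners.
image_corners = [(0, 0), (640, 0), (640, 480), (0, 480)]
marker_corners = [(120, 80), (500, 100), (520, 400), (100, 380)]
H = homography_from_corners(image_corners, marker_corners)
```

A renderer would use H (or its inverse) to warp every pixel of the image into the marker region; OpenCV's `cv2.getPerspectiveTransform` and `cv2.warpPerspective` provide the same computation plus the pixel warp.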

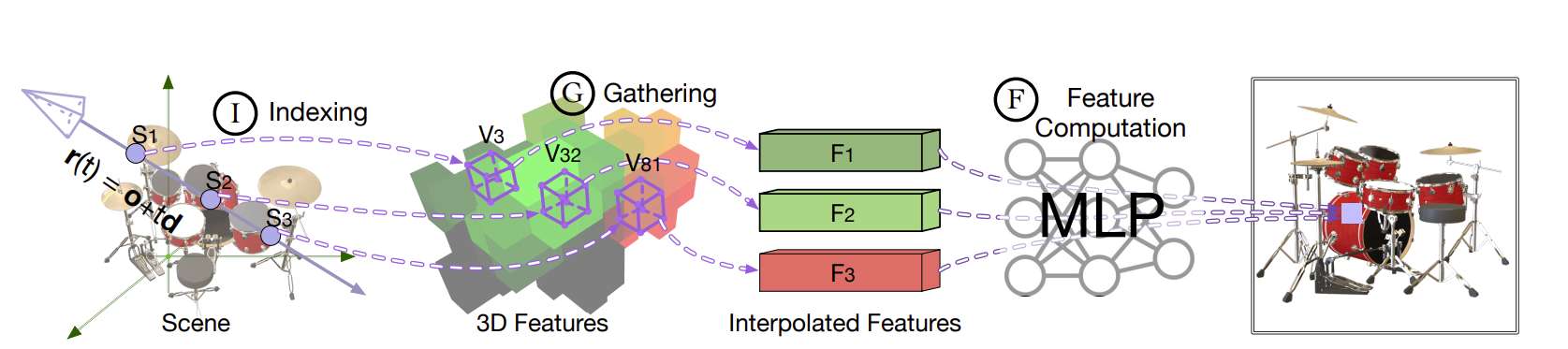

11. "Qualcomm Patent | V-dmc displacement vector integer quantization" describes a method for decoding encoded mesh data. It involves determining a set of coefficients based on the encoded mesh data, applying inverse scaling to the coefficients using integer arithmetic to obtain a set of dequantized coefficients, determining a displacement vector based on the dequantized coefficients, deforming a base mesh based on the displacement vector, and outputting the decoded mesh.

12. "Qualcomm Patent | Distributed video coding using reliability data" describes a method for encoding and decoding video data. It involves generating prediction data for pictures based on previous reconstructed pictures, generating encoded video data based on the prediction data, scaling the coefficients of transform blocks of the encoded video data based on reliability values, generating error correction data based on the encoded video data, and reconstructing pictures based on the error correction data.

13. "Qualcomm Patent | Entropy continuation and dependent frame entropy coding in point cloud compression" describes a method for compressing point cloud data. It involves sending or parsing a slice level flag indicating the entropy coding status of the first slice of a current frame, and encoding or decoding the first slice based on the entropy coding status indicated by the slice level flag.

14. "Qualcomm Patent | Companion device assisted multi-view video coding" describes a method for encoding and decoding multi-view video data. It involves generating a first group of pictures based on a first set of view pictures, sending the first encoded video data to a receiving device, receiving a multi-view encoding hint from the receiving device, generating a second group of pictures based on a second set of view pictures, encoding the second group of pictures based on the multi-view encoding hint, and sending the second encoded video data to the receiving device.
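The key idea in item 11 is performing the inverse scaling with integers only, which keeps decoders bit-exact across platforms. The sketch below assumes a fixed-point step size with a hypothetical `FRAC_BITS` of fractional bits and round-half-away-from-zero; these names, the step value, and the rounding rule are illustrative assumptions, not values from the patent or the V-DMC specification. It adds the resulting displacements directly to base-mesh vertices and omits the subdivision and inverse transform steps a full V-DMC decoder would typically perform in between.

```python
FRAC_BITS = 16  # hypothetical fixed-point precision of the quantization step

def inverse_scale(levels, step_fixed, frac_bits=FRAC_BITS):
    """Dequantize integer levels with integer multiply, add and shift only.

    step_fixed is the step size scaled by 2**frac_bits; magnitudes are rounded
    half away from zero so positive and negative levels behave symmetrically.
    """
    half = 1 << (frac_bits - 1)
    out = []
    for q in levels:
        mag = (abs(q) * step_fixed + half) >> frac_bits  # rounded magnitude
        out.append(mag if q >= 0 else -mag)             # restore the sign
    return out

def deform(base_vertices, disp):
    """Add one (dx, dy, dz) displacement per vertex to integer base-mesh positions."""
    return [
        (x + disp[3 * i], y + disp[3 * i + 1], z + disp[3 * i + 2])
        for i, (x, y, z) in enumerate(base_vertices)
    ]

step_fixed = round(2.5 * (1 << FRAC_BITS))  # step size 2.5 in fixed point: 163840
disp = inverse_scale([3, -2, 0, 1, 1, -1], step_fixed)
mesh = deform([(10, 10, 10), (0, 0, 0)], disp)
```

Here `disp` is `[8, -5, 0, 3, 3, -3]` (for example, 3 × 2.5 = 7.5 rounds to 8), and `mesh` is `[(18, 5, 10), (3, 3, -3)]`. No floating-point value enters the decode path once `step_fixed` is known.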

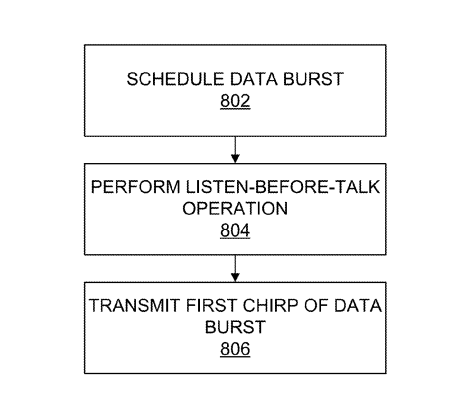

15. "Qualcomm Patent | Channel estimation based on samples of downlink transmission" describes a method for estimating the channel state based on samples of a downlink transmission. It involves sending a first downlink transmission and receiving an uplink transmission that includes channel state information for the first downlink transmission; a second downlink transmission is then sent based on that channel state information.

16. "Qualcomm Patent | Pose optimization for object tracking" describes a method for optimizing the pose of an object. It involves obtaining the pose of the object in a world coordinate system, obtaining an image of the object from a camera position, obtaining a transformation from the world to the camera position, determining reprojection error based on the pose, image, and transformation, and adjusting the pose based on the reprojection error.

17. "Magic Leap Patent | Systems and methods for sign language recognition" describes a method for recognizing and interpreting sign language using a mixed reality device. The system can recognize and translate sign language gestures and present the translated information to the user of the mixed reality device.

18. "Magic Leap Patent | Projector architecture incorporating artifact mitigation" describes a projector architecture for a head-mounted display. The architecture includes a substrate with a set of filters, each capable of transmitting a different wavelength range, and waveguides to guide light to the user's eyes.

19. "Magic Leap Patent | Near-field audio rendering" describes a method for rendering audio with near-field effects using a mixed reality device. The method involves determining the source position of an audio signal, determining the virtual speaker positions, determining the head-related transfer function (HRTF) for each virtual speaker position and ear, and applying the HRTF to the audio signal for each ear.

20. "Magic Leap Patent | Dynamic browser stage" describes a method for displaying 3D content in a spatial environment. The method involves receiving a request to display 3D content at a specific position in the spatial environment, determining if the position is within an authorized area, expanding the authorized area based on user adjustments, and displaying the 3D content within the expanded authorized area.

21. "Magic Leap Patent | Gaze timer based augmentation of functionality of a user input device" describes a method for temporarily modifying the functionality of a handheld controller or other user input device based on the user's gaze. For example, the functionality of specific buttons on the controller may be modified when the user looks at the controller.

22. "Magic Leap Patent | Matching meshes for virtual avatars" describes a method for matching the base mesh of a virtual avatar with a target mesh. The method automatically matches the base mesh with the target mesh by using rigid transformations in high-error areas and non-rigid deformations in low-error areas.

23. "HTC Patent | Head-mounted display and method for image processing based on diopter adjustment" describes a head-mounted display and a method for processing images based on diopter adjustment. The method includes receiving a command indicating a diopter setting and rendering an image based on the diopter setting.
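The reprojection-error loop in item 16 can be illustrated with a small Gauss-Newton refinement. This is a minimal sketch, assuming a pinhole camera, a known world-to-camera transform, and known 3D model points with matched 2D detections; for brevity it refines only the object's translation and holds its rotation at identity. The function names and the synthetic scene are hypothetical, and this is a generic technique rather than Qualcomm's implementation.

```python
import numpy as np

def project(model_pts, obj_t, R_cw, t_cw, focal, center):
    """Pinhole projection of object-frame points (object rotation fixed to identity)."""
    world = model_pts + obj_t        # object pose -> world coordinates
    cam = world @ R_cw.T + t_cw      # world -> camera transformation
    return focal * cam[:, :2] / cam[:, 2:3] + center

def residuals(obj_t, model_pts, observed, R_cw, t_cw, focal, center):
    """Stacked reprojection error (pixels) between projected and observed points."""
    return (project(model_pts, obj_t, R_cw, t_cw, focal, center) - observed).ravel()

def refine_translation(obj_t, model_pts, observed, R_cw, t_cw, focal, center,
                       iters=20, eps=1e-6):
    t = np.asarray(obj_t, dtype=float).copy()
    args = (model_pts, observed, R_cw, t_cw, focal, center)
    for _ in range(iters):
        r = residuals(t, *args)
        # Forward-difference Jacobian of the residuals w.r.t. the 3 translation terms.
        J = np.empty((r.size, 3))
        for k in range(3):
            step = np.zeros(3)
            step[k] = eps
            J[:, k] = (residuals(t + step, *args) - r) / eps
        # Gauss-Newton update: least-squares solution of J @ delta = -r.
        delta, *_ = np.linalg.lstsq(J, -r, rcond=None)
        t += delta
        if np.linalg.norm(delta) < 1e-12:
            break
    return t

# Synthetic scene: corners of a 20 cm cube observed by a camera at the world origin.
model = np.array([[x, y, z] for x in (-0.1, 0.1) for y in (-0.1, 0.1) for z in (-0.1, 0.1)])
R_cw, t_cw = np.eye(3), np.zeros(3)
focal, center = 500.0, np.array([320.0, 240.0])
true_t = np.array([0.1, -0.05, 2.0])
observed = project(model, true_t, R_cw, t_cw, focal, center)
est = refine_translation(true_t + [0.05, 0.05, -0.2], model, observed, R_cw, t_cw, focal, center)
```

Holding rotation fixed keeps the example short; a full tracker would optimize all six pose parameters, typically with analytic Jacobians and a robust loss to tolerate outlier detections.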

These summaries are a brief overview of the patents mentioned, providing a general understanding of their subject matter.